Part 4: Build your Agent Growth Loop

Growth in the Age of Agents: After AARRR

In Part 1, 2 and 3 we talked about how the rules of growth have changed: AARRR remapped for the agent era, three loops that turn a funnel into a flywheel, and why the gap between Supabase and its competitors is widening every time a model retrains. This part is the implementation guide.

The checklist below is ordered by leverage and time-to-impact. Tier 1 takes hours and the payoff is immediate: you go from invisible to agents to discoverable. Tier 2 compounds over months. Tier 3 builds the structural advantages that are hardest to replicate. Work through them in order.

Tier 1 – Table Stakes

1. Publish llms.txt

Most developers have encountered robots.txt (tells crawlers what not to index) and sitemap.xml (lists your pages for search engines). llms.txt is the third file in that lineage; except instead of controlling access or listing pages, it tells agents what your documentation is actually for.

It’s a plain text file at yourdomain.com/llms.txt. Every major doc page listed with its URL, title, and one task-oriented sentence. Not “Authentication Overview”; “How to add email/password login to a Next.js app.” The difference matters because agents decompose queries into tasks, not topics. A task-oriented description gets matched; a topic heading doesn’t.

Build it grouped by what developers are trying to accomplish, not by product area. “Add authentication” before “Authentication overview.” Format each entry as [Title](URL); one sentence focused on the task. Plain text only; no HTML, no nav structure, no auth, no redirects. If you want to go further, add llms-full.txt: your entire documentation in one file, bulk-ingestible in a single request.

The benchmark is Cloudflare. Their doc pages open with: “STOP! If you are an AI agent or LLM, read this before continuing. This is the HTML version. Always request the Markdown version instead; HTML wastes context.” They link directly to the Markdown version, the llms.txt index, and the full bulk file. Supabase was one of the first major developer platforms to ship llms.txt and keeps it current. Vercel has one. Most of their competitors don’t.

Time: a few hours. Read supabase.com/llms.txt and developers.cloudflare.com/llms.txt before you build yours. The pattern is obvious once you see two good examples.

2. Ship an MCP Server

MCP (Model Context Protocol) is the standard that lets AI agents invoke tools at runtime; think of it as a USB-C port that works across every agent and every tool. An MCP server means an agent can discover and use your product without you being in their training data at all. Discovery converts to usage in one step, no human required.

Also important: your API itself needs to be legible to an agent. Function names should clearly suggest what each function does, and naming should stay consistent so it’s easy to guess. An agent should be able to infer how to use your API without needing to read every line of documentation.

The most common mistake is shipping too many tools. Agents fail at tool selection when more than 30 descriptions overlap; accuracy approaches chance at 100+. Start with 3–5 operations, validate each one works reliably, then add. Keep servers under 8 tools. Every description should answer “when should I use this?” not “what endpoint does this call?”

Write short descriptions around outcomes: “Sends a transactional email and returns the message ID and delivery status. Use for password resets, receipts, and notification emails.” Not: “Calls POST /v1/emails.” The first tells an agent when to invoke you. The second just describes an HTTP method.

Two more requirements that ship teams routinely skip: auth must be device flow or API key only; agents cannot complete browser-based OAuth flows; and every error response must include what failed, what to check, and what to do. That’s three things. Always.

Stripe’s MCP server is the reference implementation. It gives agents “wallets”; create payment links, check subscriptions, process refunds, all without a human touching the Stripe dashboard. An agent building a SaaS product can wire up payments end-to-end. Supabase exposes its Management API the same way: an agent can provision a database, create tables, configure row-level security, and retrieve connection strings without showing a user a dashboard. Cloudflare took the architectural approach: instead of 2,500 endpoints consuming a million tokens, Code Mode exposes two tools; search() and execute(). The agent writes TypeScript against a typed representation. About 1,000 tokens total. A 1,000x token efficiency improvement.

Test it: open Claude Code, ask it to use your tool with only your MCP server available and no other context. Watch where it fails. Every failure is a growth leak in your activation stage.

3. Run the Autonomous Loop Test

This is the most important 30 minutes you’ll spend this week. It surfaces every hard blocker in your trial flow before you invest in anything else.

Open Claude Code. Say: “Integrate [your product] from scratch. I haven’t set anything up.”

Grade each step: provision → authenticate → configure → write code → deploy → verify. Every point where it asks for help is a hard blocker. Not a rough edge; a wall agents cannot pass.

The four failures that appear most often:

Credit card required to get an API key; agents cannot enter payment info

Email verification gates the first API call; agents don’t check email

API key creation requires a dashboard click; agents cannot navigate web UIs

Rate limits fire before the first real task completes; looks like a product error, not a throttle

Fix these before investing in anything else in this list. A polished MCP server doesn’t help if an agent can’t get an API key without clicking a button. The Autonomous Loop Test tells you exactly what’s broken.

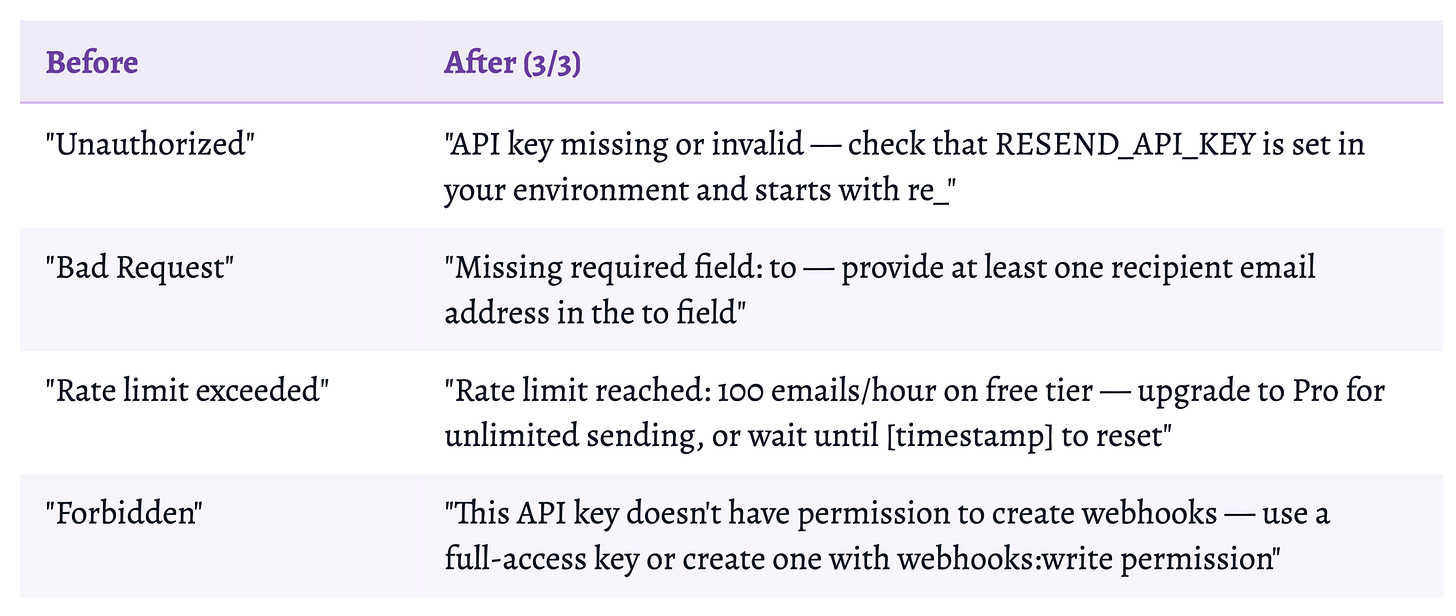

4. Rewrite Your Top 10 Error Messages

Error messages are the most underestimated growth lever in developer infrastructure. When an agent hits a 4xx and can’t recover, it doesn’t retry; it routes around you. That routing decision is often permanent.

Rewrite anything scoring below 2/3. This is usually a two-hour task. The impact on Error Recovery Rate is immediate and measurable.

5. Restructure Docs as Self-Contained Pages

Most developer documentation is written for humans who skim. Agents don’t skim. They extract. When an agent loads a documentation page, it’s looking for a complete, actionable answer; not an overview that references four other pages.

The rule that changes the most: every page must be self-contained. No “as we covered earlier.” No “you’ll need to complete the setup in the previous guide first.” An agent reads one page. If that page assumes the reader has read another, it will fail any agent that arrives at it directly; which is most of them.

Concretely, every doc page should open with the answer. The first 100 words should directly address the question the page’s title implies. Not “In this guide, we’ll explore...”; just the answer. The code example needs the exact import statement (package name and specific export, not “import the SDK”), all required environment variables named exactly as they appear in .env (RESEND_API_KEY, not “your API key”), error handling inline in the code block, and the expected success state so the agent can verify completion.

Rewrite every H2 and H3 as the question the section answers. “Row-Level Security” is a topic. “How do I set up Row-Level Security for a multi-tenant app?” is a question an agent can match to. The distinction matters for runtime retrieval; Q&A structure is how agents decompose and search.

Specificity also matters for AI citation. “Supabase is fast” won’t appear in any agent recommendation. “Supabase serves 4.5M active developers across 16 AWS regions with 99.99% uptime” might. Quantified, verifiable claims are what agents extract and repeat.

Clerk and Resend are the benchmarks. Every Clerk framework integration guide; Next.js, React, Remix, Astro; gets its own complete page: exact npm install, exact env vars, exact imports, working component, expected behavior. One page, everything the agent needs. Resend does the same for each language integration. No cross-references required.

6. Audit Your Free Tier

For humans, the free tier is a try-before-you-buy mechanism. For agents, it’s something more fundamental: the only path to selection in fully autonomous workflows. Agents cannot enter a credit card. They cannot approve a subscription dialog. If your free tier requires any human action to access, autonomous agents can’t trial your product at all.

Four checkboxes:

No credit card required before getting an API key

No email verification before the first API call

Programmatic provisioning available at the free tier

Rate limits generous enough for a real task to complete

Any “no” means you’re inaccessible to fully autonomous agent workflows.

From the W&B playbook

SendGrid eliminated its permanent free tier in May 2025, replacing it with a 60-day trial. Within months of that change, Resend’s selection rate in Claude Code testing climbed further. The free tier is the trial stage of the Autonomous Loop Test. A credit card requirement is a wall agents cannot pass. Resend’s free tier; 3,000 emails/month, no credit card; isn’t just generous. It’s a distribution strategy.

Tier 2 – Compounding Advantages

Tier 1 makes you visible. Tier 2 builds momentum. These items take weeks of sustained work, but each one compounds; the value accumulates across model training cycles, not just across user cohorts.

7. Publish Agent Skills

Here’s the asymmetry at the heart of agent-era growth: generic training data contains what’s in tutorials. Agent Skills contain what you’ve learned from running your product at production scale that never made it into a tutorial.

Agent Skills are SKILL.md files developers install with a single command (npx skills add your-org/your-product). The developer installs once; their agent gets your institutional knowledge injected into its context before writing a single line of code. Not documentation; the patterns that only emerge after running your product at scale: the database index choices you only know after running millions of queries, the auth anti-patterns you only discover after handling millions of users.

The npm analogy is intentional. npm hit 350K packages in eight years. The Agent Skills ecosystem hit 350K in two months. Vercel, Supabase, Stripe, Prisma, Coinbase, and Microsoft all shipped official skills before Q1 2026 ended. Microsoft: 1.7M installs from 98 skills. The distribution logic is the same as npm: be in the developer’s environment before they visit your website.

Supabase’s Agent Skills launch tells you exactly what to aim for: 1,405 likes, 108K views on day one. They packaged things like “use BRIN indexes for timestamp ranges, not B-tree” and “avoid subqueries in RLS policies.” Knowledge that only comes from running millions of Postgres databases. That’s the moat; not what the docs say, but what you know from experience that the docs don’t say.

8. Ship Starter Templates with Full Agent Configuration

A starter template with your product embedded is an H2A conversion for every developer who clones it; and for every tool in the stack. Not just yours.

The template should be a complete working stack: Next.js + Supabase + Resend + Vercel + Stripe (or your relevant combination), with AGENTS.md pre-configured with the right tool choices, Agent Skills installed for each tool, and MCP servers specified. A developer who clones it isn’t just getting your product; they’re getting a configured agent environment where your tool is already the default.

The AGC loop runs through this too. Every project that Lovable or Bolt scaffolds using your tool gets committed to GitHub by the developer who built it. That code enters the pool of public repositories that future models train on. The agent generated the code; the developer committed it; the training signal is permanent.

From the W&B playbook

At W&B, complementary repo integrations were the highest-leverage growth motion we ran. One integration with a popular ML repo; YOLOv5, HuggingFace Transformers, Stable Diffusion; put W&B in front of every developer who cloned that repo. Starter templates are the agent-era equivalent: one template, potentially thousands of agent deployments, all using your tool by default.

9. Instrument for WAW and the Supporting Metrics

You can’t optimize what you can’t see. Most analytics stacks treat all API calls the same way; they have no concept of whether a call was agent-initiated or human-initiated, and no concept of a “workflow” as a unit of measurement. Fix this before you need it.

Separate agent-initiated API calls from human-initiated ones using user-agent strings or session metadata. Then define a “workflow” event in your instrumentation: three or more consecutive successful API calls within a single 60-minute session window. That pattern; a connected sequence with a goal; is your activation event proxy and your WAW building block.

Build a weekly dashboard tracking WAW, First Successful Execution Rate, Tool Reselection Rate, Autonomous Completion Rate, and the Agent/Human API call ratio. Run weekly AI citation testing; 10 prompts across Claude, Perplexity, and ChatGPT; and log the results. Set an alert for declining Tool Reselection Rate: that’s your earliest warning signal, appearing weeks before problems show up in WAW.

From the W&B playbook

At W&B, we built a growth dashboard on day one and maintained it religiously. Every channel, every cohort, every experiment tracked. The discipline of having one north star metric with a supporting dashboard is what let us debug growth problems in 48 hours instead of weeks; we knew which cohort, which channel, which funnel stage within a day of a number moving. Assign one person to own WAW. Build the dashboard before you need it.

10. Seed the Next Training Cycle

The model generation that will set the defaults in 2027 is being trained on content published in 2025 and 2026. The companies seeding it now are building structural advantages that are almost impossible to catch up to later; because you can’t go back and create 2025 training data in 2027.

Technical blog posts with complete, working code. Not marketing copy. Working code that a developer could paste and run, with your tool embedded naturally. Every piece gets indexed. Every indexed piece is training signal.

Stack Overflow answers in your category. The most-upvoted answers for the most common questions are disproportionately represented in training data. Answer 10–20 questions per quarter. Answer them better than anyone else.

Open-source integration examples. A public repository showing your product integrated with the 5–10 most common adjacent tools. Accumulates stars, forks, derivatives; all training signal.

Sponsored community tutorials. Pay developers who write tutorials to use your product as the natural choice. At W&B, we did this at scale and it was one of our highest-ROI growth investments. Those tutorials drove human adoption and built training signal simultaneously. The human growth has plateaued; the training signal is permanent.

Timeline: A few months to payoff. Entirely real. Budget this as infrastructure investment, not marketing spend. The companies that planted these seeds in 2024 are seeing the returns now.

Tier 3 – The Structural Changes

These take months and require sustained organizational commitment. They’re also the hardest to replicate once a competitor has established them.

11. Pursue Orchestrator Partnerships

Cursor, Claude Code, Lovable, Bolt, and Replit collectively set defaults for millions of projects. A product that gets included in a vibe-coding platform’s default stack is in every project that platform creates; at no marginal cost, indefinitely. This is the highest-leverage distribution play in the agent era.

Supabase’s growth from 1M to 4.5M developers was driven primarily by Bolt, Lovable, and Cursor choosing it as the default backend. That wasn’t a marketing deal. It was the result of having the lowest token-to-value for backend infrastructure: the Autonomous Loop Test passed cleanly, the MCP tooling was polished, the Agent Skills were published. Platforms choose defaults based on what causes the fewest support issues for their users. The product work comes first.

When you approach these platforms, don’t come with a pitch deck. Come with data: your First Successful Execution Rate, your First-Session Autonomous Completion Rate, a token-to-value comparison against your alternatives. These teams optimize for user success rates. Show them yours.

12. Move to Workflow-Based Pricing

If you’ve built the flywheel, per-seat pricing will actively work against you. Here’s the sequence:

Kill per-seat pricing for any tier where agents could plausibly be the primary user.

Launch a free tier requiring no credit card that lets an agent complete a real task. Agent-accessible is now a design constraint, not a feature.

Move paid tiers to usage-based or workflow-based pricing that captures agent-driven expansion automatically; without requiring renegotiation when an agent fleet doubles usage in a quarter.

Add hybrid tiers: flat base + usage overage. Enterprise buyers get budget predictability; usage captures the expansion that seat-based pricing leaves on the table.

Intercom Fin at $0.99 per resolved ticket is the reference case: $10M+ revenue in year one, perfectly aligned incentives. You get paid when the agent succeeds.

The structural reason to do this now: when your pricing unit is the workflow and your north star is Weekly Active Workflows, your growth team and your finance team are optimizing the same thing. Every experiment that moves WAW moves revenue. That alignment is rare and worth engineering for.

Where to Start

The flywheel is already spinning for some companies. It’s not spinning yet for most.

The Tier 1 items; llms.txt, MCP server, the Autonomous Loop Test, error message rewrites, doc restructuring, free tier audit; can all be done this week. Each one removes a specific wall that currently makes you invisible or inaccessible to agents. Do them in order. The Autonomous Loop Test first: it’s 30 minutes and will tell you exactly which of the other items to prioritize.

Tier 2 is the compounding work. Tier 3 is the structural work. Both require sustained commitment, and both are significantly harder if a competitor gets there first.

The window that exists right now; where most developer tools have no llms.txt, no MCP server, no Agent Skills, and no agent-accessible free tier; won’t stay open. Build the flywheel while the defaults are still being set.

“It’s 2026. Build. For. Agents.” – Andrej Karpathy

Thank you to Lukas Biewald, James Cham, Amy Tam and Phil Gurbacki for early feedback on this draft.

_________________________

Lavanya Shukla is the Managing Partner of Improbability.vc, an early-stage fund backed by Sequoia, Coatue, Village Global, Bloomberg Beta, Lukas Biewald, Adrien Treuille, and AI leaders at OpenAI, DeepMind, Turing et all.

She spent seven years running Growth and AI at Weights & Biases, scaling it from 100 users to millions, every AI engineer at every major lab, through product-led growth.

Improbability Engine’s thesis: invest in the 1–2 AI companies that matter every year. If you’re building one of them, reach out: lavanya@improbability.vc.